Researchers can predict what syllables a bird will sing—and when it will sing them—by reading electrical signals in its brain, reports a new study from the University of California San Diego.

Having the ability to predict a bird’s vocal behavior from its brain activity is an early step toward building vocal prostheses for humans who have lost the ability to speak.

“Our work sets the stage for this larger goal,” said Daril Brown, an electrical and computer engineering Ph.D. student at the UC San Diego Jacobs School of Engineering and the first author of the study, which was published Sept. 23 in PLOS Computational Biology. “We’re studying birdsong in a way that will help us get one step closer to engineering a brain machine interface for vocalization and communication.”

The study explores how brain activity in songbirds such as the zebra finch can be used to forecast the bird’s vocal behavior. Songbird vocalizations are of particular interest to researchers because of their similarities to human speech: both are complex, learned behaviors.

In this work, the researchers implanted silicon electrodes in the brains of male adult zebra finches and recorded the birds’ neural activity while they sang. The researchers studied a specific set of electrical signals called local field potentials. These signals were recorded in the part of the brain that is necessary for the learning and production of song.

What’s special about local field potentials is that they are being used to predict vocal behavior in humans. These signals have so far been heavily studied in human brains, but not in songbird brains.

UC San Diego researchers wanted to fill this gap and see if these same signals in zebra finches could similarly be used to predict vocal behavior. The project is a collaborative effort between engineers and neuroscientists at UC San Diego led by Vikash Gilja, a professor of electrical and computer engineering, and Timothy Gentner, a professor of psychology and neurobiology.

“Our motivation for exploring local field potentials was that most of the complementary human work for speech prostheses development has focused on these types of signals,” said Gilja. “In this paper, we show that there are many similarities in this type of signaling between the zebra finch and humans, as well as other primates. With these signals we can start to decode the brain’s intent to generate speech.”

“In the longer term, we want to use the detailed knowledge we are gaining from the songbird brain to develop a communication prosthesis that can improve the quality of life for humans suffering a variety of illnesses and disorders,” said Gentner.

The researchers found that different features of the local field potentials translate into specific syllables of the bird’s song, as well as when the syllables will occur during song.
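To make the idea concrete, here is a minimal sketch of how signal features could be mapped to syllables. It is not the study's method: this article does not say which features or decoder the researchers used. The sampling rate, the frequency bands, the made-up rhythms in `SYLLABLE_FREQS`, the synthetic data and the nearest-centroid classifier are all assumptions chosen for illustration.

```python
import numpy as np

FS = 1000  # sampling rate in Hz (assumed for this toy example)
BANDS = [(4, 8), (8, 12), (25, 35), (35, 50)]  # hypothetical frequency bands in Hz
SYLLABLE_FREQS = [6.0, 10.0, 30.0, 42.0]  # made-up rhythm that marks each toy syllable


def band_log_powers(window, fs=FS, bands=BANDS):
    """Return log10 of the mean spectral power of one window in each band."""
    spectrum = np.abs(np.fft.rfft(window)) ** 2
    freqs = np.fft.rfftfreq(window.size, d=1.0 / fs)
    powers = [spectrum[(freqs >= lo) & (freqs < hi)].mean() for lo, hi in bands]
    return np.log10(np.array(powers) + 1e-12)  # small offset avoids log(0)


def synthetic_window(syllable, rng, n=500):
    """Toy stand-in for a recording: a jittered sine wave plus Gaussian noise."""
    t = np.arange(n) / FS
    freq = SYLLABLE_FREQS[syllable] + rng.uniform(-0.5, 0.5)
    phase = rng.uniform(0.0, 2.0 * np.pi)
    return np.sin(2.0 * np.pi * freq * t + phase) + 0.5 * rng.standard_normal(n)


def make_dataset(rng, per_syllable):
    """Build band-power features and syllable labels from synthetic windows."""
    labels = np.repeat(np.arange(len(SYLLABLE_FREQS)), per_syllable)
    features = np.array([band_log_powers(synthetic_window(s, rng)) for s in labels])
    return features, labels


def fit_centroids(features, labels):
    """Average the feature vectors of each syllable (nearest-centroid training)."""
    return {int(lab): features[labels == lab].mean(axis=0) for lab in np.unique(labels)}


def predict(centroids, feature):
    """Pick the syllable whose centroid is closest to the feature vector."""
    return min(centroids, key=lambda lab: np.linalg.norm(feature - centroids[lab]))


def demo_accuracy(seed=0):
    """Train on one synthetic set, then score predictions on a fresh one."""
    rng = np.random.default_rng(seed)
    train_x, train_y = make_dataset(rng, per_syllable=40)
    test_x, test_y = make_dataset(rng, per_syllable=20)
    centroids = fit_centroids(train_x, train_y)
    predictions = np.array([predict(centroids, f) for f in test_x])
    return float((predictions == test_y).mean())


if __name__ == "__main__":
    print(f"toy decoding accuracy: {demo_accuracy():.2f}")
```

Real recordings are far noisier and the features that matter are not known in advance, so this toy only shows the general shape of the problem: turn a window of signal into a few numbers, then ask which syllable those numbers most resemble.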

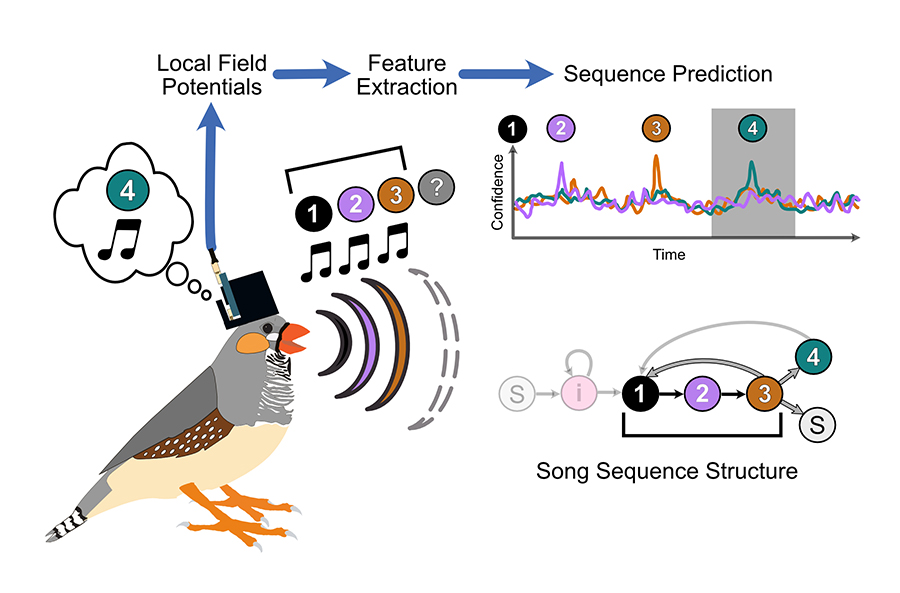

Illustration of the experimental workflow. As a male zebra finch sings his song, which consists of the sequence “1, 2, 3,” he thinks about the next syllable he will sing (“4”). The bird’s neural activity is recorded and the local field potentials are extracted. The researchers then analyze features of these signals to predict what syllable the bird is thinking of singing next.

“Using this system, we’re able to predict with high fidelity the onset of a songbird’s vocal behavior: what sequence the bird is going to sing, and when it is going to sing it,” said Brown.

The researchers can even predict variations in the song sequence, down to the syllable. For example, say the bird’s song is built on a repeating set of syllables, “1, 2, 3, 4,” and every now and then the sequence can change to something like “1, 2, 3, 4, 5,” or “1, 2, 3.” Features in the local field potentials reveal these changes, the researchers found.

“These forms of variation are important for us to test hypothetical speech prostheses, because a human doesn’t just repeat one sentence over and over again,” said Gilja. “It’s exciting that we found parallels in the brain signals that are being recorded and documented in human physiology studies to our study in songbirds.”

Paper: “Local Field Potentials in a Pre-motor Region Predict Learned Vocal Sequences.” Co-authors include Jairo I. Chavez, Derek H. Nguyen, Adam Kadwory, Bradley Voytek and Ezequiel M. Arneodo, UC San Diego.

This work was supported by the National Institutes of Health (R01DC008358, R01DC018055, R01GM134363), the National Science Foundation (BCS-1736028), the Kavli Institute for the Brain and Mind (IRG #2016-004), the Office of Naval Research (MURI N00014-13-1-0205), and the University of California—Historically Black Colleges and Universities Initiative.